Lip sync AI has rapidly changed how content creators, including YouTubers and digital marketers, build engaging content and reach global audiences. But if you have tried it yourself, you may have found that convincing lip syncing is not that easy to get right.

The good news is that AI tools that lip sync translated videos support multilingual synchronization right from the start. This not only improves the overall quality but also makes the result truly feel like you.

Read on for 12 tips for better lip sync AI videos.

Key Takeaways

- Lip sync AI works best when both the audio and video inputs are lined up to create a strong impact.

- Small human elements, such as brief pauses and natural speech patterns, make the video feel real.

- Regular testing and adjustments are the key to achieving uniform and natural results.

Lip sync AI markedly improves educational videos and viral social media videos by giving viewers a natural, engaging experience.

Minor flaws distract viewers within seconds, so mastering these optimization techniques is vital for smooth playback and engaging content.

High-quality content also carries across different media, for example bringing static images to life with an AI avatar generator.

Accurate lip sync depends on how precisely the lip sync AI maps audio phonemes to mouth movements.

Deep learning models need clear, high-fidelity input audio to predict the exact mouth movement for each syllable.

The best source images are frontal and evenly lit, which minimizes errors in jaw and lip tracking.

Keeping the head position stable lets the model make finer adjustments, which leads to a less mechanical result.

Clean audio samples are vital for accurate lip sync.

Set all audio tracks to the same volume and remove background noise so that phonemes can be detected reliably.

Add natural pauses, filler words (e.g., “um”), and variation in speed and rhythm to make voices sound human.

Key Steps for Audio Optimization:

Refined audio prevents incorrect timings, especially for challenging sounds like plosives or fricatives.

This preparation alone can improve sync accuracy considerably.
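As a rough illustration of the volume and noise steps described above, here is a minimal sketch in plain Python. It assumes audio is already available as a list of float samples in the range -1.0 to 1.0; the function names, the 0.9 target peak, and the 0.02 gate threshold are illustrative assumptions, not settings from any particular lip sync tool. A real pipeline would more likely use a dedicated audio library and a proper denoiser rather than a simple gate.

```python
def normalize_peak(samples, target_peak=0.9):
    """Scale samples so the loudest one reaches target_peak (assumed range -1.0..1.0)."""
    peak = max((abs(s) for s in samples), default=0.0)
    if peak == 0.0:
        return list(samples)  # pure silence: nothing to scale
    gain = target_peak / peak
    return [s * gain for s in samples]


def noise_gate(samples, threshold=0.02):
    """Zero out samples whose magnitude is below threshold (a crude noise floor)."""
    return [s if abs(s) >= threshold else 0.0 for s in samples]


def prepare_audio(samples, target_peak=0.9, threshold=0.02):
    """Gate first, then normalize, so the gain does not amplify the noise floor."""
    return normalize_peak(noise_gate(samples, threshold), target_peak)
```

Running every track through the same `prepare_audio` settings is one simple way to get the tracks to a comparable level before they reach the lip sync model.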

High frame rates and resolution are needed to capture subtle facial movements.

4K video with even lighting on the face helps tracking algorithms.

To avoid confusing motion detection, avoid awkward angles and quick head movements.

In group scenes, isolate the main speaker’s dialogue to give the AI clean input data, which allows smoother animation generation.

For the sync itself, use micro-expressions such as smiles and frowns to convey emotional tone, match voice profiles to personalities, and blend the transitions between phonemes so that one sound flows naturally into the next.

Run multiple passes and use as many sync points as practical to line the audio waveform up with the video.

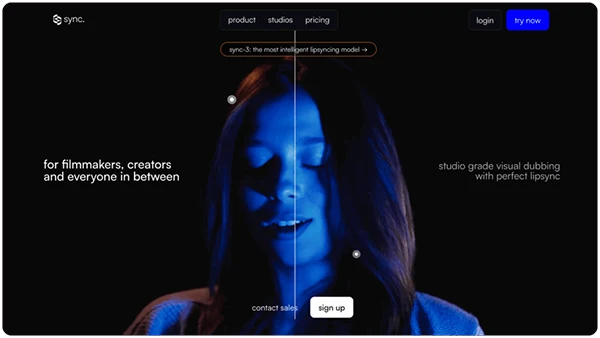

One of the most advanced systems for this is Sync.so.

In translation, the source and target languages should follow the same timing, so choose wording of similar duration.
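One way to check the timing requirement above is to compare each translated segment's duration with its source. The sketch below assumes you already have per-segment durations in seconds. The function name and the 15% tolerance are hypothetical choices for illustration.

```python
def timing_mismatches(segments, tolerance=0.15):
    """Return indexes of segments whose dubbed duration drifts too far from the source.

    segments: list of (source_seconds, target_seconds) pairs.
    tolerance: allowed relative difference (0.15 means 15%), an illustrative default.
    """
    flagged = []
    for index, (source_s, target_s) in enumerate(segments):
        if source_s <= 0:
            raise ValueError(f"segment {index} has a non-positive source duration")
        relative_diff = abs(target_s - source_s) / source_s
        if relative_diff > tolerance:
            flagged.append(index)
    return flagged
```

For example, `timing_mismatches([(2.0, 2.1), (1.5, 2.4), (3.0, 2.9)])` flags only the second segment, which is the one to rephrase with shorter wording.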

Keeping the neck and lower face movement streamlined while fixing the upper face improves believability.

Common Pitfalls and Fixes:

This system supports dozens of languages, ideal for international campaigns.

Real-time streaming calls for low-latency systems (<50 ms), and footage with occluded faces calls for models trained on datasets that include partially hidden faces.

For multi-speaker content, voice separation and per-speaker sync tracks are used.

Temporal filtering and similar techniques suppress spurious rapid movements and keep results reliable in live events, avatars, and similar settings.
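To make the idea of temporal filtering concrete, here is a minimal sketch using an exponential moving average. This is one simple filter among many, not necessarily what any given tool uses. It assumes a hypothetical per-frame "mouth openness" value between 0.0 and 1.0, and the `alpha` parameter is an illustrative name.

```python
def smooth_mouth_openness(values, alpha=0.5):
    """Exponential moving average over per-frame mouth-openness values.

    alpha close to 1.0 follows the raw signal closely; lower values smooth more.
    """
    if not 0.0 < alpha <= 1.0:
        raise ValueError("alpha must be in (0, 1]")
    smoothed = []
    previous = None
    for value in values:
        # The first frame passes through unchanged; later frames blend with history.
        previous = value if previous is None else alpha * value + (1 - alpha) * previous
        smoothed.append(previous)
    return smoothed
```

The trade-off is lag: heavier smoothing removes jitter but makes the mouth react later, which matters when latency budgets are tight.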

After generation, check for errors such as jittery mouths or uneven blobs.

Re-process at a higher bitrate if needed.

Use mono audio output for a single-speaker voice.

Have testers say lines slowly and back to back to check transitions.

Generative models rank candidate phonemes by likelihood, and subsequent refinement passes can reach over 95% sync accuracy.

In short videos, lip sync AI animates avatars for trends or advertisements.

In games and learning, virtual characters match their mouth movements to exact syllable sounds.

Marketers scale targeted messaging by combining custom voices with branded visuals.

Props or animals can also be used for effect.

One trend is the emotional avatar, which reads sentiment to connect with the viewer.

Keep audio specifications and video resolutions the same across projects so they can be processed in batches on cloud-based resources.
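Batching by shared settings can be sketched as a simple grouping step. The example assumes each project is described by a dictionary with hypothetical keys (`name`, `sample_rate_hz`, `width`, `height`). Real tools will expose their own metadata.

```python
from collections import defaultdict


def group_into_batches(projects):
    """Group project names by (sample_rate_hz, width, height) so each batch shares settings."""
    batches = defaultdict(list)
    for project in projects:
        key = (project["sample_rate_hz"], project["width"], project["height"])
        batches[key].append(project["name"])
    return dict(batches)


if __name__ == "__main__":
    demo = [
        {"name": "intro", "sample_rate_hz": 48000, "width": 3840, "height": 2160},
        {"name": "promo", "sample_rate_hz": 48000, "width": 3840, "height": 2160},
        {"name": "legacy", "sample_rate_hz": 44100, "width": 1920, "height": 1080},
    ]
    for settings, names in group_into_batches(demo).items():
        print(settings, names)
```

Any project that ends up alone in its own group is a candidate for re-exporting to the common specification before it is sent for processing.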

Frame analysis provides tracking metrics, including an update time of under 50 ms.

Testing on several devices helps catch playback irregularities before high-volume output.

If the sync lags or drifts, the frame rates likely don’t match; conform the audio and video to the same rate.

Mechanical-sounding output is often caused by low-volume sources; increase the input volume.

Fast speech can benefit from gradual slowdowns.
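To see why a frame-rate mismatch matters, the sketch below estimates how far audio and video drift apart when footage recorded at one frame rate is played back as if it had another. The function name and parameters are illustrative assumptions; the arithmetic is just frame count divided by playback rate.

```python
def sync_drift_ms(duration_s, actual_fps, assumed_fps):
    """Estimate audio/video drift in milliseconds for a clip of duration_s seconds.

    Negative result: the video finishes before the audio.
    Positive result: the video runs longer than the audio.
    """
    if actual_fps <= 0 or assumed_fps <= 0:
        raise ValueError("frame rates must be positive")
    frame_count = duration_s * actual_fps      # frames actually recorded
    playback_s = frame_count / assumed_fps     # time they take at the assumed rate
    return (playback_s - duration_s) * 1000.0
```

For a 60-second clip recorded at 29.97 fps but treated as 30 fps, this gives a drift of roughly -60 ms by the end, which is already enough for lips and sound to look out of step.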

Quick Fixes Table

| Issue | Cause | Solution |
| --- | --- | --- |
| Lip misalignment | Noisy audio | Noise reduction + uniformity |
| Jittery movement | Low-res video | Upgrade to 4K/60fps |
| Unnatural pacing | Literal translations | Adapt phrasing naturally |
| Occlusion errors | Poor angles | Use front-three-quarter views |

Newer models use augmented reality-based overlays, multi-accent training, and full-face, gesture-aware mood detection, making their output harder to distinguish from footage of real people.

Multimodal features are a common input for these models.

Quantify sync scores and watch time.

Aim for natural staying power.

A/B test variations with different audiences.

Mastery comes through a loop of feedback and improvement.
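As a starting point for the A/B step, here is a minimal sketch that compares average watch time per variant. It assumes you can export raw watch times in seconds for each variant, and the function name is hypothetical. It reports averages only and does not test whether the difference is statistically significant.

```python
from statistics import mean


def compare_variants(watch_times_by_variant):
    """Return (best_variant, mean_watch_seconds_by_variant) from raw watch times."""
    means = {
        name: mean(times)
        for name, times in watch_times_by_variant.items()
        if times  # skip variants with no data yet
    }
    if not means:
        raise ValueError("no watch-time data provided")
    best = max(means, key=means.get)
    return best, means
```

With small samples, treat the "best" variant as a hint for the next round of testing rather than a final answer.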

These 12 strategies cover lip sync AI from preparation through to polishing.

Use them step by step to create videos that connect with audiences and cut through the noise.

In the end, real lip sync does not come from a great tool alone; it comes from a mix of advanced features and the way you use them. Clean audio and effective adjustments make a huge difference.

Better lighting and video quality also lift the overall result. When all these small parts come together, the end result starts to feel less like something created by AI and more like human work.

Over time, these small improvements pile up and completely transform the quality of the videos.

Yes, it makes a huge difference. Clear audio makes it easier for the AI to match sounds to mouth movements and to see what needs adjusting.

Front-facing videos work best with lip sync AI.

Check that the audio and video frame rates match. Even small mismatches can make a big difference.