As artificial intelligence has become part of everyday content creation, telling whether a text is AI-generated or human-written has become a new challenge. AI detection tools were built to make that identification faster.

But in 2026, spotting simple patterns and repetitive language is no longer enough. Modern tools such as Undetectable.ai analyze pattern and tone to identify machine-generated content with higher precision.

This article explains AI detectors: the technologies behind them and how AI-generated text detection has evolved in 2026.

An AI detector is a tool that analyzes written content to determine whether it was written by a human or generated by an AI model such as ChatGPT. Rather than looking for copied text, it looks for common patterns that signal AI-generated content. Compared with manual review, this saves time and can improve accuracy.

At its core, its purpose is closely related to that of a plagiarism checker, except that these tools look for AI-written patterns rather than copied text.

These tools can be used in various situations, whether the goal is to maintain academic integrity, content authenticity, or transparency in a business.

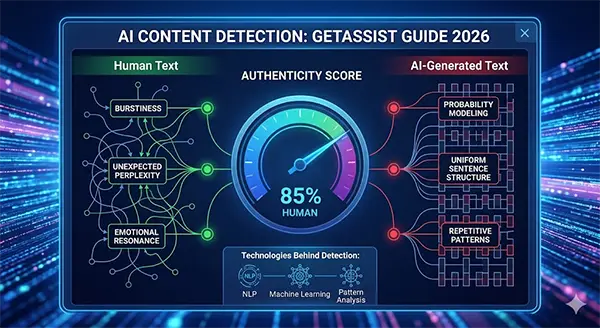

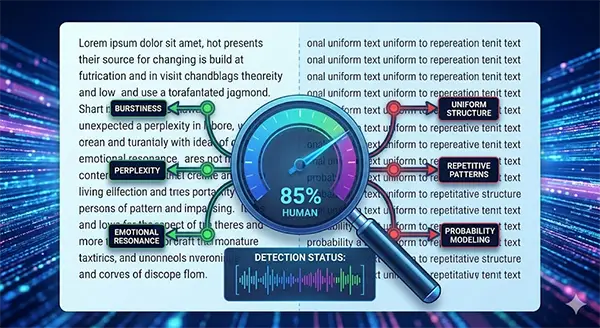

Behind the scenes, AI detectors use natural language processing (NLP) and machine learning algorithms to track the subtle differences between human writing and AI content. When the text is scanned, the detector analyzes the following:

Combined, these signals draw a clear distinction between AI and human content.

Modern technologies play a major role in making AI detection tools work properly. Let’s explore the core tech behind them:

Today’s AI detectors depend heavily on ML models that are typically built on large collections of data. These models focus on sentence variation, context patterns, and word distribution.
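To make "sentence variation" and "word distribution" more concrete, here is a minimal Python sketch of two toy measurements. It is an illustration only, not how Undetectable.ai or any particular detector works. The function names, the naive sentence splitter, and the choice of metrics (coefficient of variation and Shannon entropy) are all assumptions made for this example.

```python
import math
import re
from collections import Counter
from statistics import mean, pstdev


def split_sentences(text: str) -> list[str]:
    # Naive splitter (assumption): break after ., ! or ? followed by whitespace.
    parts = re.split(r"(?<=[.!?])\s+", text.strip())
    return [p for p in parts if p]


def tokenize(text: str) -> list[str]:
    # Lowercased runs of letters and apostrophes count as words.
    return re.findall(r"[a-z']+", text.lower())


def sentence_length_variation(text: str) -> float:
    """Coefficient of variation of sentence lengths, measured in words."""
    lengths = [len(tokenize(s)) for s in split_sentences(text)]
    lengths = [n for n in lengths if n > 0]
    if len(lengths) < 2:
        return 0.0
    return pstdev(lengths) / mean(lengths)


def word_entropy(text: str) -> float:
    """Shannon entropy, in bits, of the word-frequency distribution."""
    counts = Counter(tokenize(text))
    total = sum(counts.values())
    if total == 0:
        return 0.0
    return -sum((c / total) * math.log2(c / total) for c in counts.values())
```

A real detector would feed many such signals into a trained model rather than reading any single number on its own. Neither value here says anything definitive about who wrote a text.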

As AI writing models advance, detection models are updated to account for those advances and remain competitive.

A few years ago, detectors depended heavily on common repeated signals. Over time these methods became unreliable, and advanced detectors started to evaluate rhythm, vocabulary, structural uniformity, and grammatical patterns. Instead of looking at sentences in isolation, modern tools consider the overall writing structure.
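As a rough illustration of looking at a whole document rather than single sentences, the sketch below computes two document-level numbers: a simple vocabulary measure and a simple uniformity measure. Both metrics (type-token ratio and repeated sentence openers) and all function names are assumptions chosen for this example, not features confirmed for any real detector.

```python
import re
from collections import Counter


def words(text: str) -> list[str]:
    # Lowercased runs of letters and apostrophes count as words.
    return re.findall(r"[a-z']+", text.lower())


def type_token_ratio(text: str) -> float:
    """Distinct words divided by total words: a rough vocabulary measure."""
    tokens = words(text)
    return len(set(tokens)) / len(tokens) if tokens else 0.0


def opener_repetition(text: str) -> float:
    """Share of sentences that begin with the most common opening word."""
    # Naive splitter (assumption): break after ., ! or ? followed by whitespace.
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]
    openers = [w[0] for w in (words(s) for s in sentences) if w]
    if not openers:
        return 0.0
    return Counter(openers).most_common(1)[0][1] / len(openers)


def document_profile(text: str) -> dict[str, float]:
    # Both values are computed over the whole text, not sentence by sentence.
    return {
        "type_token_ratio": type_token_ratio(text),
        "opener_repetition": opener_repetition(text),
    }
```

Note that the type-token ratio tends to fall as a text gets longer, so it is only meaningful when comparing texts of similar length.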

NLP (natural language processing) methods allow detectors to understand the context of a text instead of simply depending on keywords. Using NLP, tools can evaluate whether the tone is consistent, whether the topic is covered at a consistent depth, and what level of reasoning is provided.

While NLP is used to interpret human input, NLG (natural language generation) covers the output side, meaning how a chatbot's algorithms produce text. To understand this better, read more about natural language generation on Wikipedia.

When both of these techniques work together, detectors can avoid the false patterns that traditional detection tools used to flag and provide more precise results.

As AI-generated text becomes harder for humans to identify, AI detection tools have become necessary. Below are some of their major benefits:

While AI detection tools have various advantages, they also have drawbacks. Here are some of their limitations and challenges:

AI detectors have become essential tools for maintaining transparency, as AI-generated content has become very common in schools, universities, and workplaces. While these tools report whether a text has AI components, they are not completely reliable and can make mistakes.

By understanding how AI detectors work, their challenges, and the contexts in which they are used, one can make better decisions and support workplaces where AI is used responsibly.

Yes, many of the tools are free. But most of them have a word limit or require a premium plan for full use.

No, most of the tools work best in English because that is where their training data is largest, so accuracy is highest in that language.

No, it’s simply that most organizations prefer to avoid it. The major reason behind this is to maintain transparency.