In the last few years, generative AI has become so advanced and accessible that social media platforms are filling up with fake images created by these tools.

Meta says it will combat the problem of fake images on its platforms, including Facebook, Instagram, and Threads.

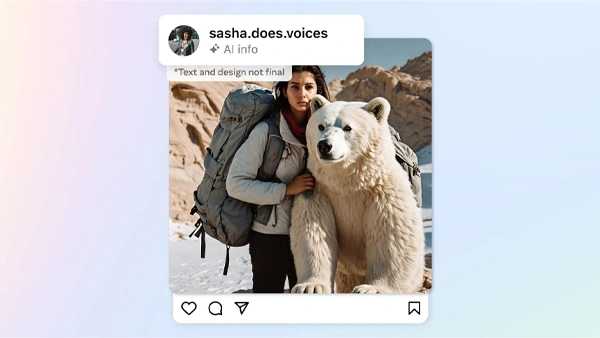

Meta already applies an ‘Imagined with AI’ label to photorealistic images created with its own AI tool. Now, it wants to do the same for images created with other generative AI services.

Images created with Meta’s tool carry visible markers, invisible watermarks, and metadata embedded within the image files, which help other platforms identify them as AI-generated.

In a blog post by Nick Clegg, its President of Global Affairs, Meta suggests that it will do the same for every AI-generated image posted on its platforms.

Clegg further emphasizes that most tech companies are working to develop common standards for identifying AI-generated content through forums like the Partnership on AI (PAI). The invisible watermarks and metadata in Meta’s images are in line with PAI’s best practices.

Meta is also building tools to identify invisible markers at scale, so that images from OpenAI, Google, Microsoft, Adobe, and Midjourney can be identified and labeled when they are posted to a Meta platform.
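To make the metadata side of this concrete, here is a minimal Python sketch of how a platform might flag an uploaded image. It rests on several assumptions: the article does not say which metadata fields Meta reads, so the sketch looks for the IPTC Digital Source Type term `trainedAlgorithmicMedia`, which some generators write into an image's XMP metadata. The function names and the label text are hypothetical, and the sketch does not attempt to detect invisible watermarks at all.

```python
from typing import Optional

# Assumption: the generator wrote the IPTC "Digital Source Type" term for
# AI-generated media into the file's XMP metadata as plain, uncompressed text.
AI_SOURCE_TYPE = b"digitalsourcetype/trainedAlgorithmicMedia"


def looks_ai_generated(image_bytes: bytes) -> bool:
    """Return True if the raw file bytes contain the IPTC AI source-type term.

    This is a naive byte scan, not a real metadata parser. It misses
    compressed or stripped metadata and ignores invisible watermarks.
    """
    return AI_SOURCE_TYPE in image_bytes


def label_for(image_bytes: bytes) -> Optional[str]:
    """Hypothetical helper: choose a label to show next to an upload."""
    return "AI-generated" if looks_ai_generated(image_bytes) else None


if __name__ == "__main__":
    # Synthetic stand-in for an image file with an embedded XMP packet.
    sample = (
        b"\xff\xd8"
        b'<x:xmpmeta><rdf:Description Iptc4xmpExt:DigitalSourceType='
        b'"http://cv.iptc.org/newscodes/digitalsourcetype/trainedAlgorithmicMedia"/>'
        b"</x:xmpmeta>"
        b"\xff\xd9"
    )
    print(label_for(sample))
    print(label_for(b"\xff\xd8 no metadata here \xff\xd9"))
```

Metadata like this is easy to strip by re-saving or screenshotting an image, which is one reason invisible watermarks are used alongside it.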

Clegg writes, “People are often coming across AI-generated content for the first time and our users have told us they appreciate transparency around this new technology. So, it’s important that we help people know when photorealistic content they’re seeing has been created using AI.”

He also said that digitally altered images or videos that “create a particularly high risk of materially deceiving the public on a matter of importance” will get a prominent label from the platform.

More companies in the tech industry are pushing for ways to identify misleading AI-generated content.